- Welcome to our new member: A warm welcome to Cris, a new postdoc from the Biological Psychology lab funded under the Hearing4All.connect project. His research explores how the brain processes uncertainty, specifically how hearing loss makes sensory information less reliable. He aims to test how this uncertainty affects the way people value and choose to engage in social interactions. We look forward to discussing open science and potential collaboration projects with him in OSIG.

- Multiverse analysis talk at Charité Berlin: Cassie recently gave a talk at Charité Berlin titled 'Exploring the Multiverse: Transparency, Uncertainty, and Robustness in Data Analysis', presented as part of the QUEST Seminar on Responsible Research. By systematically evaluating all defensible analysis pipelines, multiverse analysis shows how robust a result is and makes analytical uncertainty transparent. Catch up on the materials here.

- Open science 101: Cassie and Micha led an Introduction to Open Science session during the department colloquium. They covered core components like open data, open code, open materials, open access, and preregistration. Materials will be coming soon via a new OSIG resources repository.

- GRN ReproducibiliTea journal club: Sumbul led our very first GRN ReproducibiliTea journal club in December 2025. ReproducibiliTea is a grassroots initiative that originated at the University of Oxford in 2018 and has since expanded to over 100 institutions, creating informal spaces for discussing research transparency over tea. We kicked things off by discussing “FAIRly Big: Reproducible Large-Scale Data Processing” (Wagner et al., 2022, Scientific Data), focusing on reproducible processing for large datasets using DataLad and containers. The session prompted a great discussion on privacy constraints, infrastructure limits, and real-world computational reproducibility. It was exactly the open, curious, and constructive environment we hoped to build. Students, doctoral researchers, and staff are warmly invited to join future sessions. Keep an eye on the mailing list for dates, and feel free to suggest papers or host a session. See you at the next tea (schedule coming soon)!

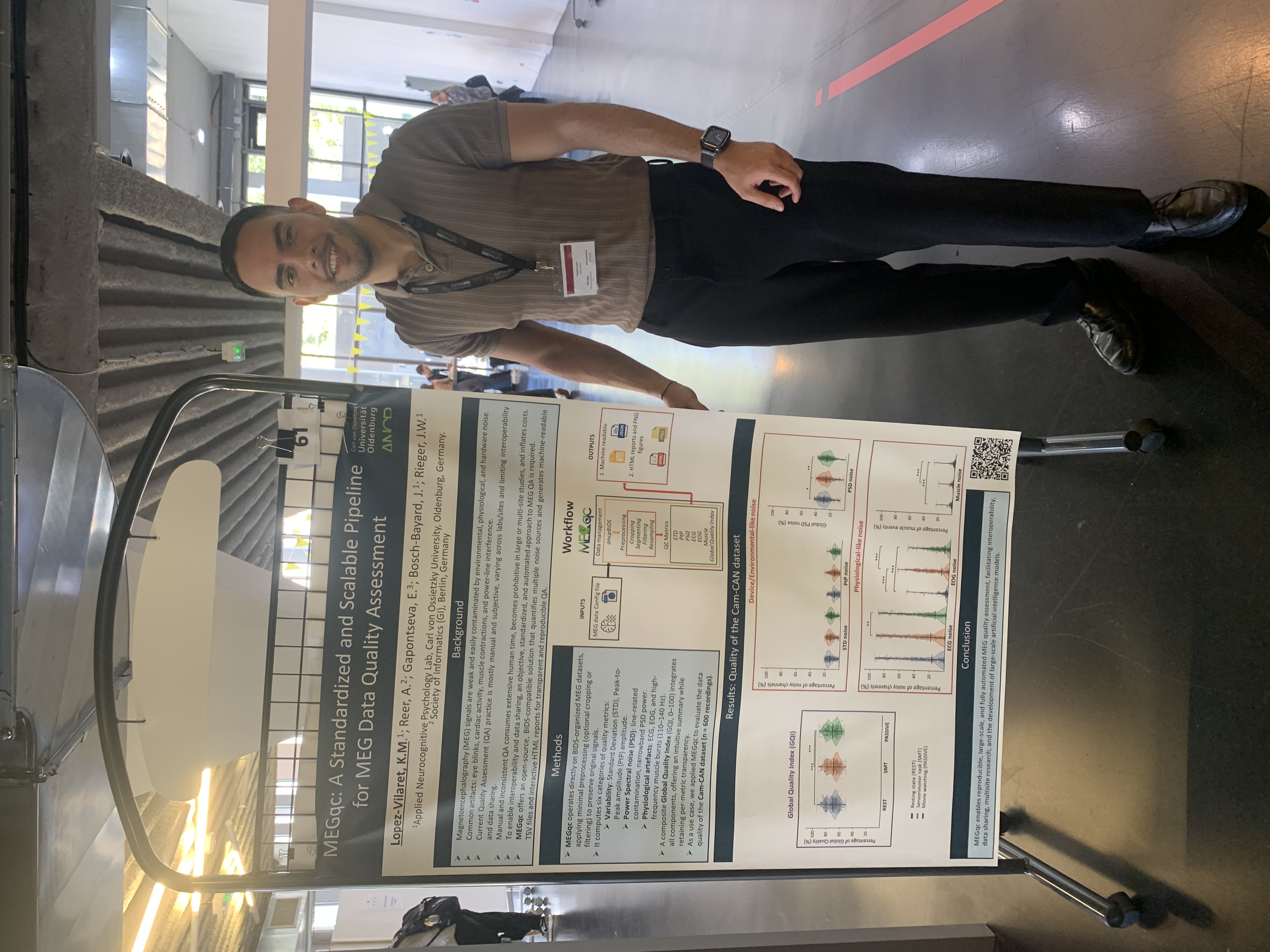

- PracticalMEEG workshop: Karel ran a workshop at PracticalMEEG 2025 covering MEGqc, a new open-source pipeline designed for fast, standardized, and fully reproducible quality assessment. The materials are available here and here.